Pangram’s $4M Raise Fuels AI-Driven Deepfake Defense

Pangram, a startup founded by former Tesla and Google engineers, has secured $4 million in seed funding to advance its AI-powered technology for detecting machine-generated text as educational institutions and businesses grapple with a surge in deepfake threats. The funding round, led by California-based venture capital firm ScOp with participation from Script Capital and Cadenza, positions the company to address what has become a critical cybersecurity challenge across multiple sectors.

The urgency of Pangram’s mission reflects the dramatic escalation of AI-generated content threats, with deepfake fraud attempts surging by 3,000% in 2023 and over 96% of fraud cases now involving some form of deepfake technology. This funding injection arrives at a pivotal moment when the cost to create convincing deepfakes has plummeted from over $1,000 in 2019 to approximately $300 in 2025, democratizing access to sophisticated deception tools.

The Expanding Deepfake Threat Landscape

Financial Sector Under Siege

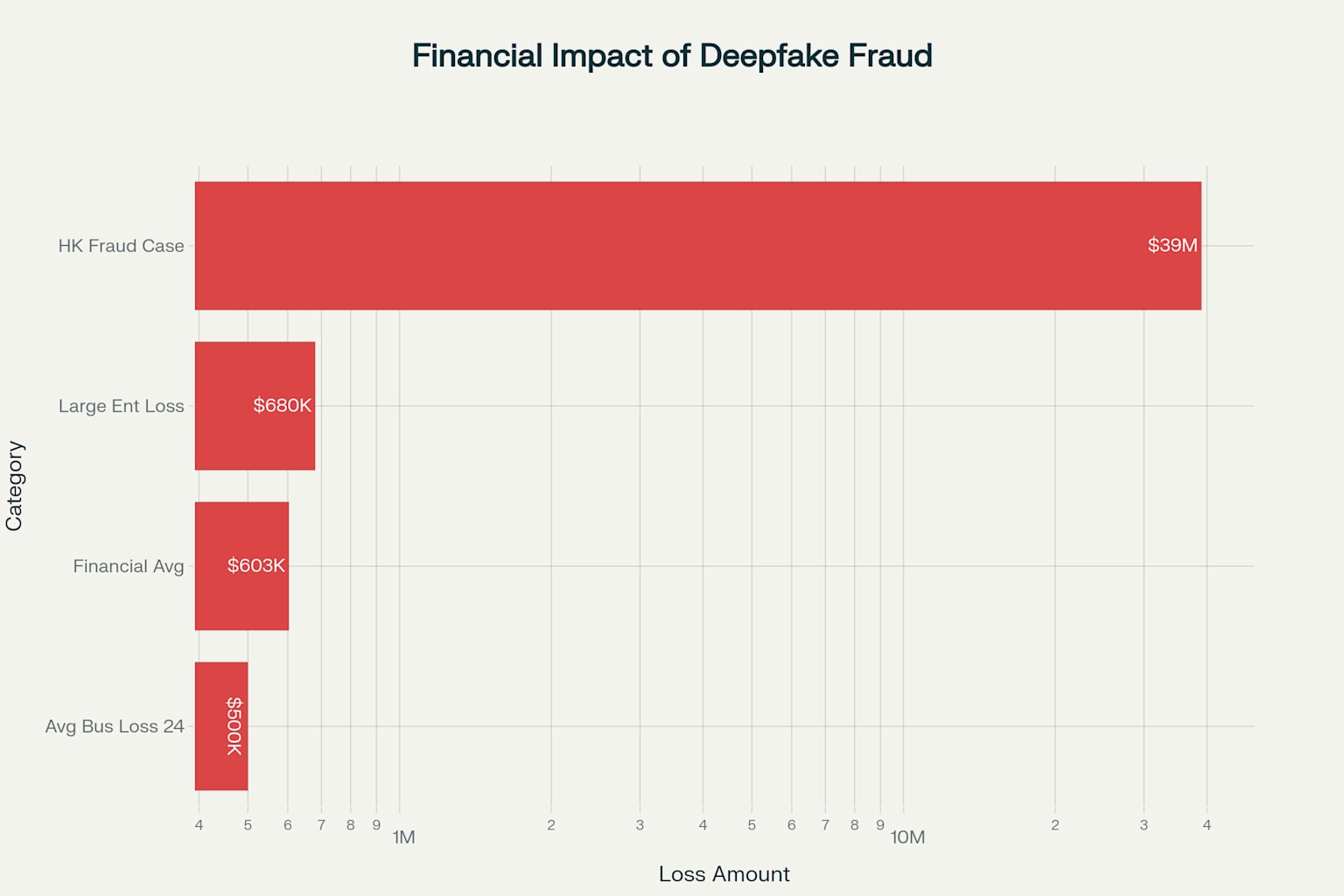

The financial impact of deepfake fraud has reached alarming proportions across industries, with businesses facing average losses of nearly $500,000 per incident in 2024. The financial services sector bears the heaviest burden, with average losses exceeding $603,000 per company and 23% of fintech organizations reporting losses over $1 million. The most dramatic single incident occurred in Hong Kong, where a finance worker transferred about $25 million to fraudsters who used deepfake technology to impersonate company executives during a video conference call.

The sophistication of these attacks has evolved beyond simple audio manipulation. The Hong Kong case involved fraudsters creating digital replicas of multiple employees who appeared to participate authentically in a multi-person video conference, demonstrating the advanced capabilities now available to criminal actors. This incident marked the first known case where victims were deceived in a multi-person video conference setting, establishing a new paradigm for corporate fraud.

Educational Institutions Face Digital Deception

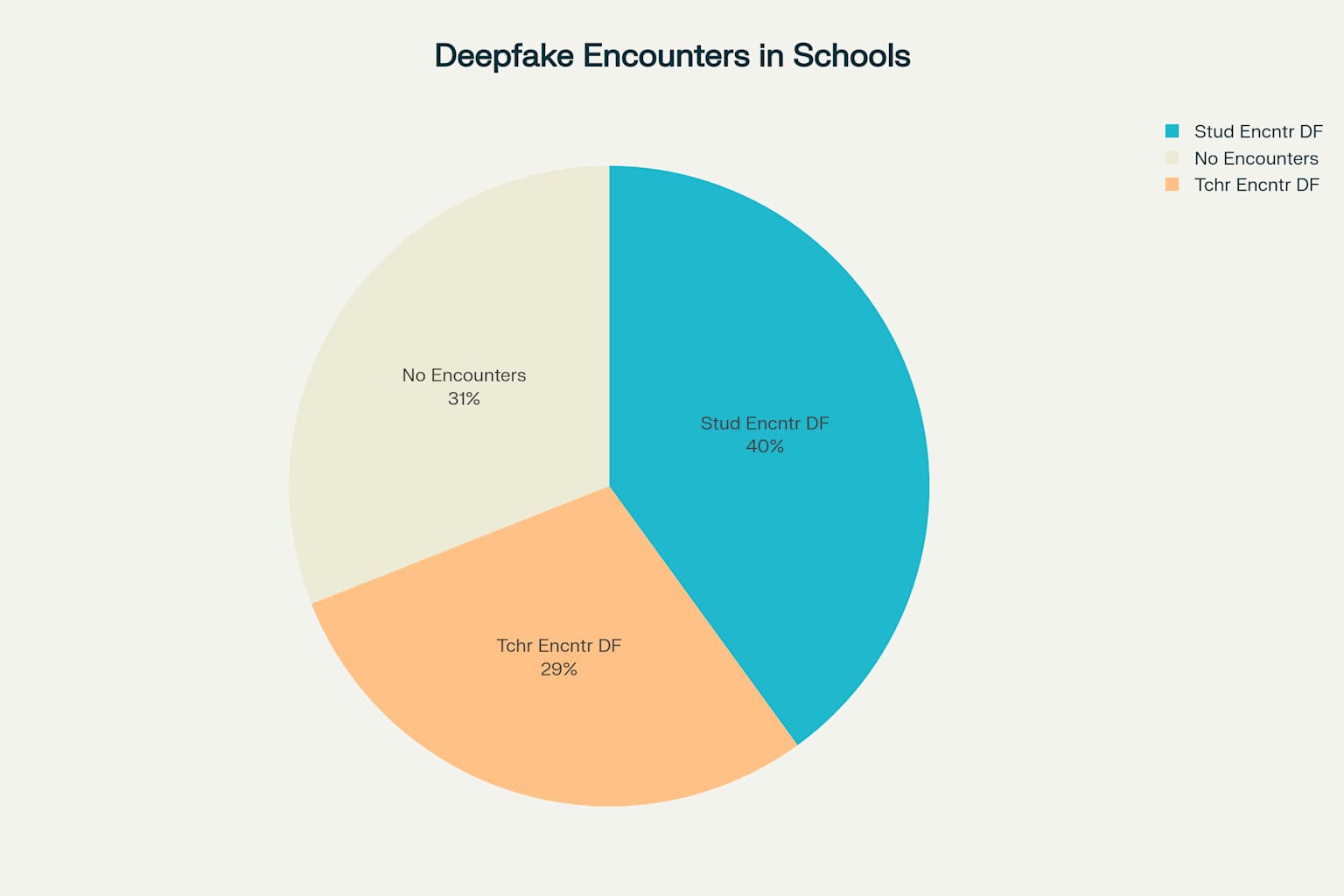

Educational settings have become ground zero for deepfake misuse, with 40% of students and 29% of teachers reporting encounters with deepfakes during the 2023-24 school year. The scope of educational deepfake incidents extends from students creating fake pornographic images of classmates to more sophisticated attacks targeting educators and administrators.

The Pikesville High School case in Baltimore County, Maryland, exemplifies the severity of educational deepfake threats. Athletic director Dazhon Darien used artificial intelligence to create a racist audio recording impersonating Principal Eric Eiswert, resulting in death threats against the principal and significant disruption to the school community. The incident demonstrates how deepfakes can weaponize institutional authority and destroy reputations within educational environments.

Despite the prevalence of these incidents, only 19% of students report that their schools have explained what deepfakes are, revealing a critical gap in digital literacy education. This educational deficit occurs against a backdrop where human detection accuracy for deepfake content averages just 62% for images and 24.5% for videos, highlighting the inadequacy of manual detection methods.

Market Dynamics and Growth Trajectory

Explosive Market Expansion

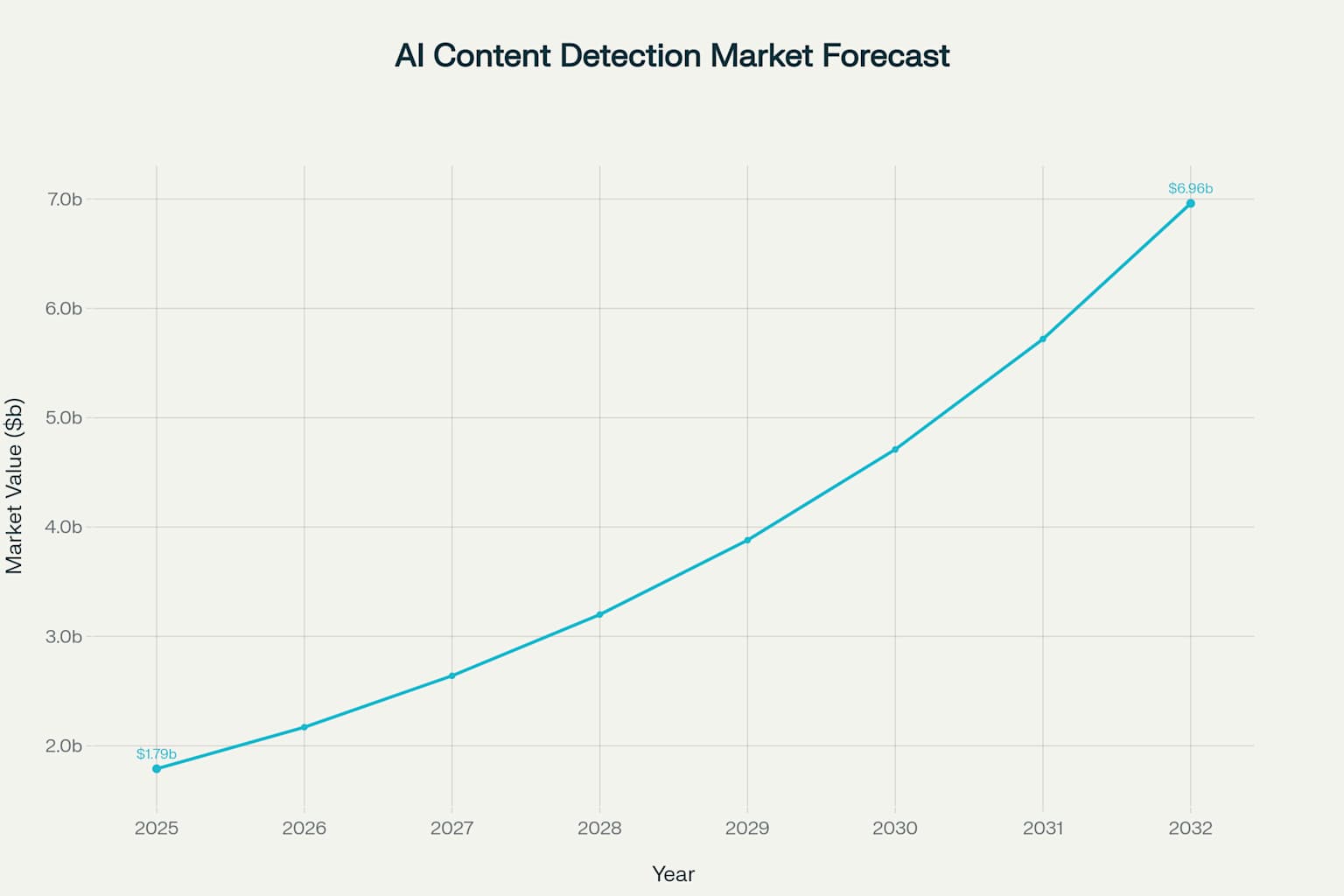

The AI content detection software market is growing rapidly: it is valued at $1.79 billion in 2025 and projected to reach $6.96 billion by 2032, a compound annual growth rate of 21.4%. This expansion mirrors the broader AI detection tools market, which was valued at $4.5 billion in 2023 and is expected to reach $15.7 billion by 2033.
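As a quick check on the growth figures above, the compound annual growth rate can be recomputed from the start value, end value, and number of years. The sketch below is illustrative only: the function name `cagr` is our own, and the inputs are the dollar figures quoted in this article, in billions.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.214 means 21.4%)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted in the article, in billions of US dollars.
content_detection = cagr(1.79, 6.96, 2032 - 2025)
detection_tools = cagr(4.5, 15.7, 2033 - 2023)

print(f"AI content detection software: {content_detection:.1%}")
print(f"Broader AI detection tools:    {detection_tools:.1%}")
```

The first result reproduces the 21.4% rate cited for the content detection software market. The second is the rate implied by the 2023 and 2033 figures for the broader market, which the article does not state directly.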

The deepfake detection segment specifically is projected to reach $1.2 billion by 2026, driven by increasing regulatory compliance requirements and the surge in deepfake-related security concerns. North America accounts for 42.5% of global market revenue in 2025, while the Asia-Pacific region posts the fastest growth at a 25% compound annual growth rate, fueled by educational and media sector adoption in India and China.

Competitive Landscape and Technical Differentiation

Pangram enters a competitive market that includes established players such as Turnitin, GPTZero, Microsoft, Google, and specialized deepfake detection companies like Reality Defender and Attestiv. However, the company’s technical approach distinguishes it from competitors through several key innovations.

The startup’s detection system leverages active learning algorithms and a training corpus of 100 million human-written documents, enabling it to recognize subtle patterns across diverse writing styles and domains. Unlike specialized detection models that focus on specific text types, this approach is meant to let Pangram generalize more broadly.

Independent research from the University of Maryland and Microsoft supports Pangram’s accuracy claims, reporting that it was the only AI detection system evaluated that outperformed trained human experts at identifying AI-generated content. The system achieves 100% accuracy in detecting outputs from OpenAI’s most advanced o1-pro model and maintains 96.7% accuracy on “humanized” content designed to evade detection.

Technical Innovation and Methodology

Active Learning and Mirror Prompting

Pangram’s technological advantage stems from its implementation of active learning algorithms that iteratively improve detection capabilities by focusing on the most challenging examples. This methodology employs hard negative mining, which identifies examples with the highest error rates and generates AI samples that closely resemble human writing, then retrains the model until errors are eliminated.
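The loop described above can be sketched in a few lines. This is a toy illustration under stated assumptions, not Pangram’s implementation: each document is reduced to a single made-up numeric feature, the "detector" is a one-threshold decision stump, and `mirror` is a hypothetical stand-in for generating an AI sample that resembles a given human sample. All names and numbers are invented for the example.

```python
from typing import List, Tuple

Example = Tuple[float, int]  # (feature, label): label 1 = AI, 0 = human


def train_stump(data: List[Example]) -> float:
    """Toy detector: pick the threshold with the fewest training errors."""
    xs = sorted({x for x, _ in data})
    candidates = [(a + b) / 2 for a, b in zip(xs, xs[1:])]
    if not candidates:
        raise ValueError("need at least two distinct feature values")

    def errors(t: float) -> int:
        # A sample is predicted AI when its feature exceeds the threshold.
        return sum((x > t) != bool(y) for x, y in data)

    return min(candidates, key=errors)


def mine_hard_negatives(threshold: float, pool: List[float], k: int) -> List[float]:
    """Return up to k human samples wrongly flagged as AI, worst first."""
    false_positives = [x for x in pool if x > threshold]
    return sorted(false_positives, reverse=True)[:k]


def mirror(x: float) -> float:
    """Hypothetical: an AI sample that closely resembles human sample x."""
    return x + 0.1


def active_learning(seed: List[Example], pool: List[float],
                    max_rounds: int = 5, k: int = 2):
    data = list(seed)
    threshold = train_stump(data)
    for _ in range(max_rounds):
        hard = mine_hard_negatives(threshold, pool, k)
        if not hard:  # no human sample in the pool is misclassified
            break
        for x in hard:
            data.append((x, 0))          # the hard human example
            data.append((mirror(x), 1))  # its AI look-alike
        threshold = train_stump(data)    # retrain on the enlarged set
    return threshold, data


seed_data = [(0.10, 0), (0.20, 0), (0.50, 1), (0.90, 1)]
human_pool = [0.10, 0.20, 0.30, 0.55, 0.62]
final_threshold, final_data = active_learning(seed_data, human_pool)
print(final_threshold, len(final_data))
```

In this toy run the threshold moves up until no human sample in the pool is flagged, at the cost of missing one of the original AI samples. A one-feature model cannot avoid that trade-off; a real detector has a far richer representation to work with.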

The company’s synthetic mirror prompting technique creates comprehensive training datasets by using an exhaustive library of prompts across all major open and closed-source AI models. This approach ensures the detection system remains current with evolving language model capabilities and can generalize to new AI systems before they achieve widespread adoption.
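The article does not spell out how mirror prompting works internally, so the following is only a sketch of the general idea of crossing a prompt library with a set of models to build labeled synthetic data. The prompt strings, model names, and `generate` callables are hypothetical placeholders; a real pipeline would call actual model APIs in their place.

```python
from itertools import product
from typing import Callable, Dict, List


def build_synthetic_set(prompts: List[str],
                        models: Dict[str, Callable[[str], str]]) -> List[dict]:
    """Generate one AI-labeled sample for every (prompt, model) pair."""
    rows = []
    for prompt, (name, generate) in product(prompts, models.items()):
        rows.append({
            "model": name,
            "prompt": prompt,
            "text": generate(prompt),
            "label": 1,  # 1 = AI-generated
        })
    return rows


# Hypothetical stand-ins for real language models.
stub_models = {
    "model-a": lambda p: f"[model-a output for: {p}]",
    "model-b": lambda p: f"[model-b output for: {p}]",
}
prompt_library = [
    "Write a product review for a kettle.",
    "Summarize the causes of the French Revolution.",
    "Draft a cover letter for a nursing job.",
]

rows = build_synthetic_set(prompt_library, stub_models)
print(len(rows))
```

Adding a newly released model then amounts to registering one more entry in the model table and regenerating the grid, which is one plausible reading of how such a pipeline keeps pace with new systems.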

Scalability and Cost Efficiency

Pangram’s use of open-source models for infrastructure reduces computing costs while maintaining high accuracy levels, providing a competitive advantage over solutions that rely on proprietary, expensive computing resources. The company’s data pipeline is designed for scale, enabling continuous integration of new language models and prompt variations as they emerge.

The system supports detection across 20 languages, including Arabic, Japanese, Korean, and Hindi, addressing the global nature of deepfake threats. This multilingual capability positions Pangram to serve international markets where deepfake detection tools remain limited.

Commercial Applications and Client Adoption

Enterprise and Platform Integration

Pangram’s client base includes prominent platforms such as Quora and NewsGuard, demonstrating real-world application across diverse content environments. The company offers flexible pricing structures, with individual plans starting at $15 per month for up to 600 scans, professional plans at $45 monthly, and enterprise solutions for large-scale implementations.

The startup’s Chrome extension provides real-time detection, allowing users to highlight text and immediately assess AI authorship. This extends AI detection beyond institutional users to individual consumers and small businesses.

Educational Sector Applications

In educational contexts, Pangram’s technology addresses the growing challenge of AI-assisted academic dishonesty while maintaining accuracy standards critical for fair assessment. The system’s ability to identify mixed human-AI content provides educators with nuanced insights into student work, distinguishing between complete AI generation and AI-assisted writing.

The platform’s low false positive rate addresses a critical concern in educational settings, where incorrect AI detection accusations can have serious consequences for students. This reliability factor differentiates Pangram from earlier detection tools that suffered from higher error rates.
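For readers unfamiliar with the metric, the false positive rate is the share of genuinely human-written texts that a detector wrongly flags as AI: FP / (FP + TN). The sketch below computes it on a small invented sample; the labels and predictions are illustrative and are not Pangram results.

```python
from typing import Sequence


def false_positive_rate(labels: Sequence[int], predictions: Sequence[int]) -> float:
    """FPR = FP / (FP + TN), where 1 = AI and 0 = human."""
    pairs = list(zip(labels, predictions))
    fp = sum(1 for y, p in pairs if y == 0 and p == 1)  # human flagged as AI
    tn = sum(1 for y, p in pairs if y == 0 and p == 0)  # human correctly cleared
    if fp + tn == 0:
        raise ValueError("no human-written samples to evaluate")
    return fp / (fp + tn)


# Invented example: five human essays (0) and three AI essays (1).
labels      = [0, 0, 0, 0, 1, 1, 1, 0]
predictions = [0, 0, 1, 0, 1, 1, 0, 0]

fpr = false_positive_rate(labels, predictions)
print(f"False positive rate: {fpr:.0%}")
```

Here one of five human essays is flagged, so the rate is 20%. In a classroom, each such false positive is a student wrongly accused, which is why this metric matters more to educators than overall accuracy.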

Funding Impact and Strategic Positioning

Investment Significance and Validation

The roughly $4 million total, which combines $2.7 million in new investment with a previous $1.25 million pre-seed round, provides Pangram with resources to expand its eight-person team and develop consumer-focused products. Lead investor ScOp’s participation signals confidence in the company’s technical approach and market positioning.

The funding enables Pangram to accelerate development of its active learning systems and expand its training corpus, maintaining competitive advantages as language models continue to evolve. This investment also supports the company’s goal of staying ahead of emerging AI threats through continuous model updates and dataset expansion.

Strategic Market Entry

Pangram’s entry into the AI detection market occurs during a period of heightened awareness about deepfake threats and increasing regulatory attention to AI-generated content. The U.S. Copyright Office’s recent position that purely AI-generated content cannot be copyrighted creates additional demand for reliable detection tools across media and publishing industries.

The company’s focus on both educational and business applications positions it to capture market share across multiple high-growth segments. This dual-market approach provides revenue diversification and reduces dependence on any single sector’s adoption patterns.

Implications for Educational and Business Environments

Transforming Educational Integrity

The arrival of Pangram’s technology addresses fundamental challenges in maintaining academic integrity as AI writing tools become ubiquitous in educational settings. The system’s ability to detect increasingly sophisticated AI-generated content helps educators distinguish between legitimate AI assistance and academic dishonesty.

The platform’s explanatory capabilities, which identify specific AI-generated phrases and patterns, provide educational value beyond simple detection. This functionality supports digital literacy initiatives by helping students and educators understand AI writing characteristics and develop critical evaluation skills.

Corporate Security Enhancement

For business environments, Pangram’s detection capabilities address multiple threat vectors beyond traditional deepfake fraud. The system’s ability to identify AI-generated communications helps organizations verify the authenticity of internal and external correspondence, protecting against social engineering attacks that leverage AI-generated content.

The technology’s real-time processing capabilities enable integration into existing security workflows, providing automated screening of emails, documents, and other text-based communications. This automation reduces the burden on security teams while improving detection accuracy compared to manual review processes.

Future Market Evolution and Challenges

Technological Arms Race

The ongoing development of more sophisticated language models presents both challenges and opportunities for detection technology companies like Pangram. While newer AI models become more human-like in their output, Pangram’s research indicates that advanced models can sometimes be easier to detect due to their distinctive patterns.

The company’s continuous learning approach, which incorporates new language models into training datasets as they are released, provides a sustainable method for maintaining detection accuracy. This proactive strategy contrasts with reactive approaches that struggle to keep pace with AI model evolution.

Regulatory and Compliance Landscape

Increasing government attention to AI-generated content and deepfake threats is likely to drive mandatory detection requirements across industries. Educational institutions face pressure to implement comprehensive AI detection policies, while businesses in regulated sectors may be required to demonstrate content authenticity capabilities.

Pangram’s established accuracy metrics and third-party validation position the company to meet emerging compliance requirements. The system’s ability to provide detailed analysis and documentation of detection decisions supports audit and regulatory reporting needs.

Conclusion

Pangram’s $4 million funding round represents a significant milestone in the evolution of AI-driven deepfake defense technology, addressing critical vulnerabilities across educational and business environments. The company’s technical innovations in active learning and large-scale training data processing have produced a detection system that independent research found to outperform trained human experts, setting a new benchmark for accuracy in an increasingly sophisticated threat landscape.

Several factors converge here: explosive growth in deepfake incidents, substantial financial losses across industries, widespread vulnerability among educational institutions, and rapid market expansion. Together they create an environment in which Pangram’s technology addresses urgent, measurable needs. The company’s reported 100% accuracy on outputs from OpenAI’s o1-pro model, combined with cost-effective operations through open-source infrastructure, gives it a competitive advantage.

As deepfake threats continue to evolve and proliferate, Pangram’s funding success signals investor confidence in technical solutions that can adapt to emerging challenges. The startup’s dual focus on educational integrity and corporate security positions it to capture significant market share in a sector projected to grow nearly four-fold over the next seven years. The implications extend beyond immediate fraud prevention to encompass fundamental questions about digital authenticity, institutional trust, and the preservation of human authorship in an AI-saturated information environment.